Hugging Face Dataset Guide¶

Hugging Face offers numerous methods for creating and interacting with datasets. This page gives a basic overview, with recommendations aimed at image dataset uploads, though the principles transfer to other data types. The options are listed in order of increasing complexity, with guidance, recommendations, and links to the relevant parts of the Hugging Face docs for the most up-to-date information.

- Web interface (UI): For smaller, simpler uploads.

- Hugging Face Command Line Interface (CLI): For most use-cases, easy access from cluster.

- Hugging Face API (python package): For when more fine-grained control than is achievable with the CLI is needed.

- Git/Git LFS: Mainly needed when multiple PRs lead to merge conflicts, since Hugging Face provides no other means of resolving them.

Info

Some sections of the Hugging Face docs, such as those for huggingface_hub, only offer version-specific links for stable releases. If such a link points to an older version, a banner on the page will alert you that a newer version is available, so keep an eye out for it.

Most of the content below is covered in various parts of Hugging Face's Upload Guide; this page is provided as a summary reference mainly to determine which method might be best and link to the appropriate docs. Additionally, we include an integrity check to help you ensure that your repo contains all the desired files after uploading through any of these methods.

See also Hugging Face's tips and tricks for large uploads.

Note on Authentication¶

All of these methods require authentication to edit datasets, whether by password, token, or SSH key, and all support editing both Public (accessible to anyone on the internet) and Private (accessible only to members of the organization) repos. Two key notes on authentication:

- Private repositories are only visible if you are authenticated.

- If using tokens for access, create a fine-grained token scoped to only the permissions you need.
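For token-based access from a script, one option is to keep the token out of the code and read it from an environment variable. The sketch below assumes the token is stored in `HF_TOKEN`; `read_hf_token` and `authenticate` are hypothetical helper names, while `login` is the function provided by huggingface_hub.

```python
import os


def read_hf_token(env_var: str = "HF_TOKEN") -> str:
    """Return the access token stored in an environment variable.

    `read_hf_token` is a hypothetical helper, not part of huggingface_hub.
    """
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(f"Set {env_var} to a fine-grained access token first.")
    return token


def authenticate() -> None:
    """Log in to the Hub with the token read from the environment."""
    # Imported here so the helper above works without huggingface_hub installed.
    from huggingface_hub import login

    login(token=read_hf_token())
```

Calling `authenticate()` once at the start of a script should be enough for the upload calls that follow.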

Upload a Dataset with the Web Interface¶

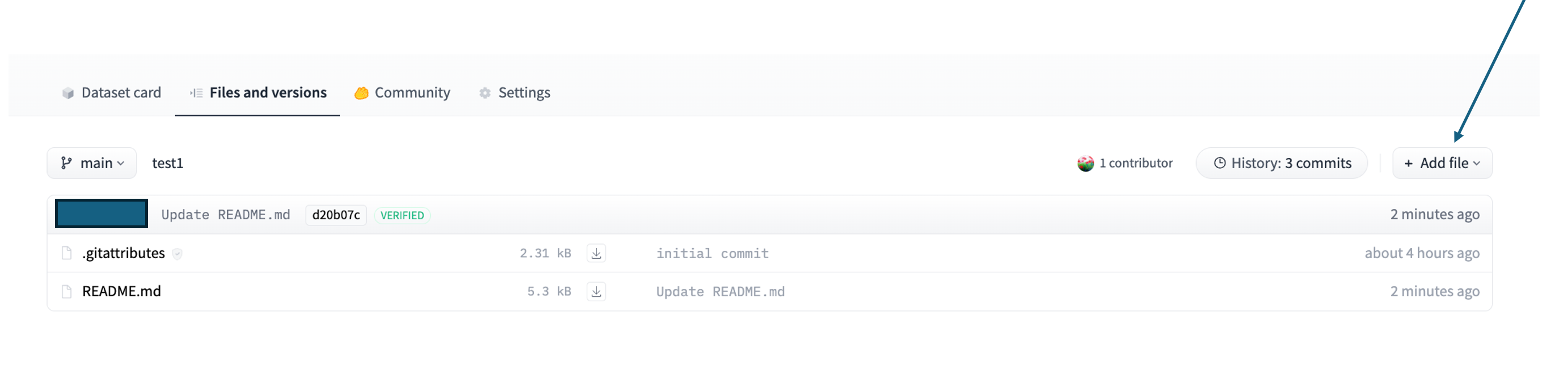

In the Files and Versions tab of the repository, you can select "Contribute" to add or create files or start a pull request directly from the web interface.

This method works well for smaller files (<100MB) or data uploads from distributed sources, provided the data has a relatively flat structure with few directories and/or few files. If you are uploading existing files, navigate to the target folder first.

Upload a Dataset with the Hugging Face CLI¶

Hugging Face provides a comprehensive Command Line Interface (CLI) and corresponding docs. The CLI is installed with the huggingface_hub Python package, but it can also be installed on its own; either way, it is called with hf <command>.

The Hugging Face CLI is the ideal method for uploads that are large in volume, have more than a few files, and/or a folder structure with many or nested directories. It works directly from HPC clusters, such as OSC. Under the hood, hf upload uses the same upload functions described below, under Upload a Dataset with HfApi. Review Hugging Face's guidance on large folder uploads before selecting a method for uploading large folders to a non-empty repository.

When uploading to a dataset, note that the repo type must be specified (--repo-type=dataset); this is also the case for spaces, since Hugging Face treats models as the default.

There are specific hf datasets and hf repo commands for more general queries and repo initialization.

Upload a Dataset with HfApi¶

When more complex dataset structures are involved, or more fine-grained control (not exposed on the CLI) over how a repo will be organized on Hugging Face is needed, the Hugging Face API may be the answer. For instance, if a glob pattern cannot sufficiently express the necessary exclusions of subfolders or files, HfApi is likely the preferred choice. HfApi is a class, accessible through the huggingface_hub package, that acts as a Python wrapper for the API.
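As a rough illustration of control that is awkward to express as a glob, the sketch below selects files with an arbitrary Python predicate and commits them in a single operation. `select_files`, `upload_selected`, and the `keep` predicate are hypothetical names introduced here; `CommitOperationAdd` and `create_commit` come from huggingface_hub.

```python
from pathlib import Path
from typing import Callable, List


def select_files(root: Path, keep: Callable[[Path], bool]) -> List[Path]:
    """Return files under `root`, as paths relative to it, that pass `keep`."""
    relative = (p.relative_to(root) for p in root.rglob("*") if p.is_file())
    return sorted(rel for rel in relative if keep(rel))


def upload_selected(repo_id: str, root: Path, keep: Callable[[Path], bool]) -> None:
    """Commit only the selected files to a dataset repo, keeping their layout."""
    # Imported here so select_files can be tried without huggingface_hub.
    from huggingface_hub import CommitOperationAdd, HfApi

    operations = [
        CommitOperationAdd(path_in_repo=rel.as_posix(), path_or_fileobj=str(root / rel))
        for rel in select_files(root, keep)
    ]
    HfApi().create_commit(
        repo_id=repo_id,
        repo_type="dataset",
        operations=operations,
        commit_message="Upload selected files",
    )
```

Running `select_files` on its own first gives a list to inspect before anything is sent, which works as an informal dry run.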

Please see the Hugging Face API docs for the most up-to-date guidance. For quick reference, HfApi can upload a single file (upload_file) or a whole folder with its structure maintained (upload_folder).
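A minimal sketch of the two calls, assuming you are already authenticated. The repo ID and local paths are placeholders, and `upload_examples` is a hypothetical wrapper; its `api` argument is expected to be an `HfApi` instance.

```python
def upload_examples(api, repo_id: str) -> None:
    """Upload one file, then a whole folder, to a dataset repo.

    `api` is expected to be a huggingface_hub.HfApi instance.
    """
    # Single file: path_in_repo decides where it lands in the repo.
    api.upload_file(
        path_or_fileobj="path/to/local/metadata.csv",
        path_in_repo="metadata.csv",
        repo_id=repo_id,
        repo_type="dataset",
    )
    # Whole folder: the directory structure under folder_path is kept.
    api.upload_folder(
        folder_path="path/to/local/images",
        path_in_repo="images",
        repo_id=repo_id,
        repo_type="dataset",
        ignore_patterns=["*.tmp"],  # optional glob-based exclusions
    )


# Usage (needs network access and authentication):
# from huggingface_hub import HfApi
# upload_examples(HfApi(), "<org-name>/<repo-name>")
```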

Repos can also be created through the Hugging Face API using the create_repo method, which takes the repo ID along with options such as the repo type and visibility.
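For reference, a call might look like the sketch below. The parameter choices are illustrative rather than a recommendation, and `create_dataset_repo` is a hypothetical wrapper around `HfApi.create_repo`.

```python
def create_dataset_repo(api, repo_id: str, private: bool = True):
    """Create a dataset repo, doing nothing if it already exists.

    `api` is expected to be a huggingface_hub.HfApi instance.
    """
    return api.create_repo(
        repo_id=repo_id,
        repo_type="dataset",  # models are the default, so state this explicitly
        private=private,
        exist_ok=True,  # do not raise if the repo already exists
    )


# Usage (needs network access and authentication):
# from huggingface_hub import HfApi
# create_dataset_repo(HfApi(), "<org-name>/<repo-name>")
```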

See also instructions using the datasets package.

Upload a Dataset with Git¶

Using Git to interact with Hugging Face requires installing Git LFS and the Hugging Face CLI, and then enabling large file upload for the repo.

Hugging Face provides details on Git vs. HTTP, which in practice means using Git vs. HfApi.

Hugging Face has moved away from Git LFS, instead utilizing Xet for data storage and version control; this is backwards compatible with LFS.

Other Repo Considerations¶

One case where Git may be needed is a merge conflict. Unlike GitHub, Hugging Face does not have conflict resolution UI tools, nor does it provide merge conflict resolution capabilities in the CLI or HfApi. The only means of resolving merge conflicts is to manually update the pull request in a local clone, pulling main into your PR branch and resolving the conflicts there.

Integrity Check¶

Sometimes uploads fail partway through, leaving one or more files un-uploaded. Unfortunately, there does not seem to be an easy way to be alerted to these issues when not uploading through the UI. Additionally, using a glob pattern to select files for upload without a dry run [1] (in Git terms, this would be running git status after adding files) can also lead to accidental exclusion. To catch these issues, we recommend the following integrity check after uploading a dataset [1].

```python
import pandas as pd
from huggingface_hub import HfApi

api = HfApi()

repo_id = "<org-name>/<repo-name>"
repo_type = "dataset"

file_list = api.list_repo_files(repo_id=repo_id, repo_type=repo_type)
file_df = pd.DataFrame(data={"filepath": file_list})

metadata = pd.read_csv("path/to/metadata/file")

# assuming you use the same filepath in your system as in the repo
df = pd.merge(file_df, metadata, how="inner", on="filepath")

df.shape[0]  # this should match the number of expected images
```
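When the count does not match, it helps to know which files are affected. The following sketch compares the two path lists directly; `compare_file_lists` is a hypothetical helper, and it assumes the metadata paths and repo paths use the same relative form.

```python
from typing import Dict, Iterable, List


def compare_file_lists(expected: Iterable[str], uploaded: Iterable[str]) -> Dict[str, List[str]]:
    """Report paths that are expected but absent, and present but not expected."""
    expected_set, uploaded_set = set(expected), set(uploaded)
    return {
        "missing": sorted(expected_set - uploaded_set),
        "unexpected": sorted(uploaded_set - expected_set),
    }


# Usage with the variables from the integrity check:
# report = compare_file_lists(metadata["filepath"], file_list)
# print(report["missing"])
```

The "unexpected" list will usually include repo housekeeping files such as README.md and .gitattributes, so "missing" is the entry to watch.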

Pro tip

If you don't have a metadata file for your images, use the sum-buddy package to generate one in your local file system. This can also be used as a metadata file for the dataset viewer as needed (see image datasets docs for more information on setting this up). Similar options are available for audio and video datasets.
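If installing another package is not an option, a comparable file can be produced with the standard library. This is a stand-in sketch and not sum-buddy's implementation: the function name `write_checksum_csv` and the column names (`filepath`, `filename`, `md5`) are assumptions, chosen so that `filepath` lines up with the column used in the integrity check.

```python
import csv
import hashlib
from pathlib import Path


def write_checksum_csv(root: Path, out_csv: Path) -> int:
    """Write one row per file under `root`; return the number of rows written."""
    root = Path(root)
    out_resolved = Path(out_csv).resolve()
    rows = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        if path.resolve() == out_resolved:
            continue  # skip the output file itself if it lives under root
        # read_bytes loads the whole file; fine for images, slow for huge files
        digest = hashlib.md5(path.read_bytes()).hexdigest()
        rows.append((path.relative_to(root).as_posix(), path.name, digest))
    with open(out_csv, "w", newline="") as handle:
        writer = csv.writer(handle)
        writer.writerow(["filepath", "filename", "md5"])  # assumed column names
        writer.writerows(rows)
    return len(rows)
```

Paths are written relative to `root` with forward slashes, so they match the repo paths when `root` is the folder that was uploaded.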

[1] The Hugging Face CLI does have a dry-run mode for downloading datasets. Additionally, if working with Git LFS, there is a preupload LFS option to ensure all files are properly preset and organized before committing. There are additional considerations for sharding noted in the Hugging Face docs.